High-Level Architecture

Introduction

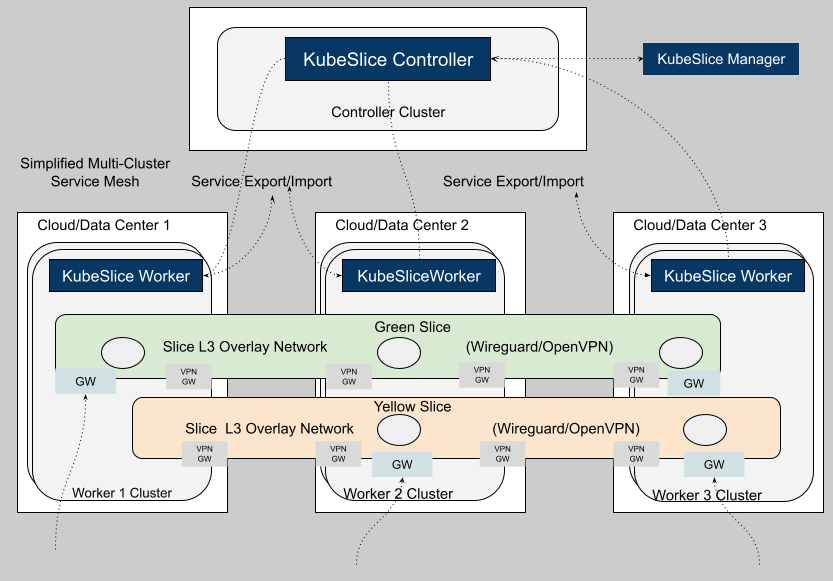

KubeSlice Enterprise is a simplified multi-cluster service mesh platform that simplifies service discovery and connectivity across multiple clusters and clouds. It provides multi-cluster networking for multi-cloud, edge, cloud, hybrid-cloud, and bare-metal Kubernetes clusters. It is a vendor-neutral, extensible framework for building flat overlay networks across heterogeneous Kubernetes clusters.

KubeSlice enables and simplifies pod-to-pod communication for L3-L7 protocols across a fleet of clusters by using a construct called a Slice. Each slice can be associated with a set of clusters with varying topologies and with one or more namespaces in each cluster. The pods in the slice namespaces can reach each other over the slice-specific flat overlay network, so a slice can also be described as an application-specific VPC that spans clusters. KubeSlice allows creating multiple slices across clusters, with each slice having a dedicated set of namespaces in each associated cluster. Slices make it easy to segment and isolate applications, and they keep namespaces consistent across the associated clusters within a slice. KubeSlice provides software-defined, highly available, and secure connections across clusters using VPN gateways.
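The "dedicated set of namespaces" property can be illustrated with a small conceptual model. The sketch below is not the KubeSlice API: the `Slice` class, the `find_namespace_conflicts` helper, and the cluster and namespace names are all hypothetical, and the rule that a namespace in a given cluster belongs to at most one slice is an interpretation of the text above.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Slice:
    """Hypothetical in-memory model of a slice (not a KubeSlice type)."""
    name: str
    # cluster name -> namespaces associated with this slice in that cluster
    namespaces: dict[str, set[str]] = field(default_factory=dict)


def find_namespace_conflicts(slices: list[Slice]) -> list[tuple[str, str, list[str]]]:
    """Return (cluster, namespace, slice names) for every namespace that
    more than one slice claims in the same cluster."""
    owners = defaultdict(list)
    for s in slices:
        for cluster, spaces in s.namespaces.items():
            for ns in spaces:
                owners[(cluster, ns)].append(s.name)
    return sorted(
        (cluster, ns, sorted(names))
        for (cluster, ns), names in owners.items()
        if len(names) > 1
    )
```

An empty result means every namespace is dedicated to a single slice in its cluster; any returned tuple names the slices that would need to be separated.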

KubeSlice also enables service discovery and reachability across clusters by using Service Exports and Imports in each cluster. A service running in a slice-associated namespace can be exported over the slice overlay network so that pods running in other clusters associated with the slice can discover and reach it. Slice DNS in each cluster can be used for FQDN-based inter-cluster service-to-service communication across the slice. Slice DNS holds service entries with the overlay network IP addresses of the service endpoints.

The KubeSlice Enterprise architecture consists of several components that interact with each other to manage the lifecycle of the slice overlay network. The architecture diagram above shows the primary components of KubeSlice Enterprise and the connections between them.

The following is a high-level overview of the architecture components. Each component is described in more detail in the subsequent sections.

Architecture Components

The primary components of the KubeSlice architecture include:

Controller Cluster

The Controller Cluster is the central management plane for the entire KubeSlice Enterprise deployment. It hosts the following components:

- KubeSlice Controller: The KubeSlice Controller is the primary orchestration engine in the Controller Cluster. It manages user configuration and orchestrates the creation and lifecycle of slice overlay networks across all connected worker clusters. It owns and manages a set of Custom Resource Definitions (CRDs) that store configuration and operational state for all slices, gateways, namespaces, and policies. Worker clusters connect to the Controller Cluster's Kubernetes API server to fetch their slice configuration from custom resource objects. The KubeSlice Controller also coordinates Service Export and Import operations, propagating service discovery information bidirectionally across all participating worker clusters.

- KubeSlice Manager: The KubeSlice Manager is the enterprise management UI and API layer. It connects to the KubeSlice Controller and provides a centralized interface for administrators to manage slices, onboard clusters, configure network policies, and monitor the health of the multi-cluster service mesh. The KubeSlice Manager translates operator intent into objects that the KubeSlice Controller acts upon.

- Observability and Telemetry: The Controller Cluster also hosts observability components that collect and aggregate telemetry data from all worker clusters. This includes metrics and events related to slice performance, network traffic, and resource usage. The KubeSlice Controller uses this telemetry data to provide insights into the health and performance of the multi-cluster service mesh, enabling proactive management and troubleshooting. You can view the telemetry data in the KubeSlice Manager (UI) for real-time monitoring and historical analysis of your multi-cluster environment.
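The configuration flow described for the KubeSlice Controller, in which each worker cluster fetches its own slice configuration from the Controller Cluster, can be sketched as a select-and-diff step. This is a conceptual sketch only: plain dictionaries stand in for custom resource objects, the `name` and `clusters` keys are hypothetical rather than the real CRD schema, and no Kubernetes API calls are made.

```python
def slices_for_cluster(slice_configs: list[dict], cluster: str) -> set[str]:
    """Return the names of slices whose (hypothetical) 'clusters' list
    includes the given worker cluster."""
    return {
        cfg["name"]
        for cfg in slice_configs
        if cluster in cfg.get("clusters", [])
    }


def plan_reconcile(desired: set[str], current: set[str]) -> dict[str, list[str]]:
    """Compare the slices a worker should have with the slices it has now
    and return which ones to create and which ones to delete."""
    return {
        "create": sorted(desired - current),
        "delete": sorted(current - desired),
    }
```

For example, a worker that currently runs only a stale slice but is listed in a new one would get a plan that creates the new slice and deletes the stale one.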

Worker Clusters

Worker clusters are the Kubernetes clusters that participate in one or more slice overlay networks. Each worker cluster runs a KubeSlice Worker and one or more Slice Gateways.

In the architecture diagram, three worker clusters (Worker 1, Worker 2, and Worker 3) span across three separate cloud or data center environments (Cloud/Data Center 1, 2, and 3).

- KubeSlice Worker: The KubeSlice Worker is the principal component deployed in each worker cluster. It is the enterprise counterpart of the open-source Slice Operator and extends it with additional policy enforcement, resource governance, and enterprise observability features.

  The KubeSlice Worker performs the following functions in its cluster:

  - Connects to the Controller Cluster's Kubernetes API server to fetch slice configuration.

  - Provisions and manages Slice Gateways (GW) for inter-cluster VPN connectivity.

  - Programs routing rules to funnel traffic between application pods and gateway pods.

  - Registers and synchronizes Service Exports and Imports with the KubeSlice Controller.

  - Enforces QoS profiles, RBAC policies, and resource quotas propagated from the Controller Cluster.

- Slice Gateways: Each worker cluster that participates in a slice can have one or more Slice Gateway (GW) pods. There can be multiple types of gateways associated with a slice:

  - Slice VPN Gateways

  - Slice Ingress/Egress Gateways for east-west traffic across the slice

  - Slice Ingress Gateways for north-south traffic from external sources

  - Slice VPC Ingress/Egress Gateways for securely accessing cloud resources from local/remote worker clusters over the slice overlay network

Slice VPN Gateways establish encrypted tunnels between clusters using either WireGuard or OpenVPN as the VPN protocol. Gateways are provisioned per slice, providing network isolation between different slice overlays running on the same set of clusters.
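Because gateways are provisioned per slice, two slices that share the same pair of clusters still get separate tunnels. The sketch below enumerates tunnels for a slice under one loud assumption: a full-mesh topology in which every pair of clusters in the slice is connected. The text above does not state the topology, and the `Tunnel` type and `plan_tunnels` function are hypothetical names used only for illustration.

```python
from dataclasses import dataclass
from itertools import combinations

SUPPORTED_VPN = {"WireGuard", "OpenVPN"}  # the two protocols named in the text


@dataclass(frozen=True)
class Tunnel:
    """Hypothetical record of one encrypted tunnel belonging to one slice."""
    slice_name: str
    cluster_a: str
    cluster_b: str
    vpn: str


def plan_tunnels(slice_name: str, clusters: list[str], vpn: str = "WireGuard") -> list[Tunnel]:
    """List one tunnel per cluster pair in the slice (full mesh is assumed)."""
    if vpn not in SUPPORTED_VPN:
        raise ValueError(f"unsupported VPN protocol: {vpn}")
    return [
        Tunnel(slice_name, a, b, vpn)
        for a, b in combinations(sorted(set(clusters)), 2)
    ]
```

Under the full-mesh assumption, a slice spanning n clusters needs n(n-1)/2 tunnels, so a three-cluster slice needs three and a two-cluster slice needs one.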

Simplified Multi-Cluster Service Mesh

KubeSlice Enterprise implements a Simplified Multi-Cluster Service Mesh across all connected worker clusters.

This service mesh provides:

- L3 pod-to-pod connectivity across cluster boundaries via slice overlay networks.

- Service Export and Import for automatic service discovery and cross-cluster reachability.

- Slice DNS for FQDN-based inter-cluster service-to-service communication.

- Network segmentation and isolation between tenants using separate named slices.

The KubeSlice Controller centrally manages the service mesh, eliminating the need for a service mesh control plane in each cluster, which significantly reduces operational complexity.

Slice Overlay Networks

KubeSlice Enterprise supports multiple named slice overlays running concurrently across the same set of worker clusters. Each slice defines an independent L3 overlay network with its own subnet, VPN tunnels, gateways, and namespace associations.

The architecture diagram illustrates two example slices:

- Green Slice: The Green Slice is a Layer 3 overlay network that spans all three worker clusters. It uses WireGuard or OpenVPN to establish encrypted tunnels between the gateway pods in each cluster. Application pods in the Green Slice namespace can directly communicate over the flat overlay network using private overlay IP addresses.

- Yellow Slice: The Yellow Slice is a separate Layer 3 overlay network that operates on a subset of the worker clusters. Like the Green Slice, it uses either WireGuard or OpenVPN for its encrypted inter-cluster tunnels. It is fully isolated from the Green Slice: pods in the Yellow Slice cannot communicate with pods in the Green Slice, even on the same worker cluster, unless explicitly permitted through service export policies. This isolation enables multiple teams or applications to share the same physical clusters while maintaining strong network segmentation.
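The isolation between the two example slices can be expressed as a simple membership check. This is a conceptual sketch, not KubeSlice code: the `Pod` type, the membership table, and the namespace names are hypothetical, and the check deliberately ignores the exception for traffic permitted through service export policies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pod:
    """Hypothetical pod identity: where it runs and in which namespace."""
    name: str
    cluster: str
    namespace: str


def can_reach_over_overlay(a: Pod, b: Pod, membership: dict[tuple[str, str], str]) -> bool:
    """Return True only if both pods sit in namespaces of the same slice.

    `membership` maps (cluster, namespace) to a slice name. Pods outside
    any slice, or in different slices, are treated as isolated.
    """
    slice_a = membership.get((a.cluster, a.namespace))
    slice_b = membership.get((b.cluster, b.namespace))
    return slice_a is not None and slice_a == slice_b
```

With a table that places a `shop` namespace in the Green Slice on all three workers and a `billing` namespace in the Yellow Slice on two of them, two `shop` pods on different clusters are reachable, while a `shop` pod and a `billing` pod on the same cluster are not.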

Service Export and Import

The Service Export/Import mechanism facilitates service discovery across clusters within a slice, eliminating the need for external load balancers or manual DNS configuration. When a service is exported from a namespace in one worker cluster, the KubeSlice Controller distributes the corresponding DNS entry to all other worker clusters participating in the same slice. Pods in remote clusters resolve the service's FQDN through Slice DNS, which maps it to the overlay network IP of the exported service endpoint.
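The export flow described above can be sketched as a small simulation. Everything here is illustrative: the `SliceDNS` class and `export_service` function are hypothetical stand-ins, the overlay IP addresses are made up, and the `svc.slice.local` FQDN suffix is an assumption rather than something this section states.

```python
from dataclasses import dataclass, field


@dataclass
class SliceDNS:
    """Hypothetical per-cluster DNS table: FQDN -> overlay endpoint IPs."""
    entries: dict[str, list[str]] = field(default_factory=dict)

    def resolve(self, fqdn: str) -> list[str]:
        """Return the overlay IPs for an FQDN, or an empty list if unknown."""
        return self.entries.get(fqdn, [])


def export_service(
    slice_dns: dict[str, SliceDNS],
    source_cluster: str,
    service: str,
    namespace: str,
    overlay_ips: list[str],
) -> str:
    """Distribute a DNS entry for an exported service to every other
    cluster in the slice and return the FQDN that was registered."""
    fqdn = f"{service}.{namespace}.svc.slice.local"  # assumed naming scheme
    for cluster, dns in slice_dns.items():
        if cluster != source_cluster:
            dns.entries[fqdn] = list(overlay_ips)
    return fqdn
```

After an export from one cluster, a pod in any other cluster of the slice can resolve the returned FQDN through its local table and receive the overlay IPs of the exported endpoints.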

Summary of Key Components

| Component | Location | Role |
|---|---|---|
| KubeSlice Controller | Controller Cluster | Central orchestration of slice lifecycle, CRD management, and Service Export/Import coordination |
| KubeSlice Manager | Management Plane (UI/API) | Enterprise UI and API for cluster onboarding, slice configuration, and operational visibility |
| KubeSlice Worker | Each Worker Cluster | Per-cluster agent that provisions gateways, enforces policies, and syncs configuration from the Controller Cluster |
| Slice VPN Gateway (VPN GW) | Each Worker Cluster | Per-slice VPN gateway pods that establish encrypted WireGuard or OpenVPN tunnels between clusters |
| Slice Ingress/Egress Gateways (GW) | Each Worker Cluster | Per-slice Ingress/Egress gateways for east-west, north-south, and VPC access traffic patterns |
| Slice DNS | Each Worker Cluster | Provides FQDN-based resolution of exported services across clusters within a slice |
| Green Slice / Yellow Slice | Across Worker Clusters | Example slices with associated namespaces and L3 overlay networks that provide isolated pod-to-pod connectivity for different applications or tenants |